I was in a planning meeting where six engineering teams presented their AI agent strategies.

Six teams. Six different answers.

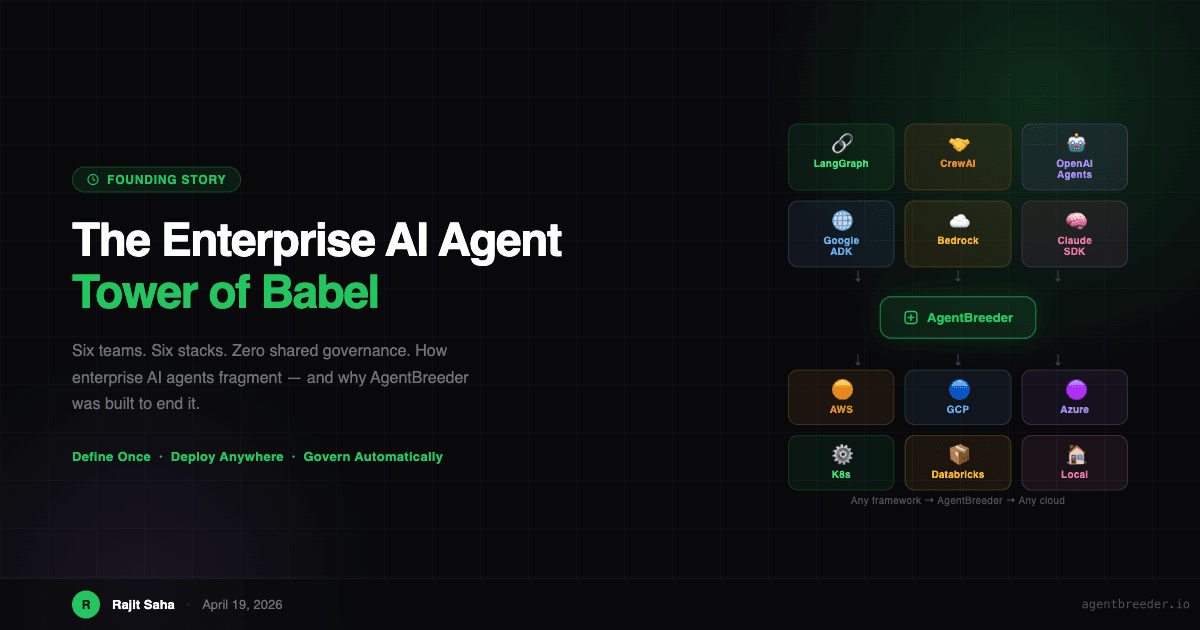

Team 1: "We are on LangGraph, deployed to our own Kubernetes cluster." Team 2: "We are using CrewAI on AWS ECS Fargate." Team 3: "OpenAI Assistants — managed cloud. No ops overhead." Team 4: "Google ADK, because our data pipelines run on BigQuery." Team 5: "Bedrock only. Security signed off on AWS." Team 6: "We wrote our own framework in TypeScript."

Zero shared governance. Zero shared cost tracking. Zero shared observability. Six teams solving the same problems six different ways — all convinced their stack was the correct one.

That was the day I started building AgentBreeder.

The AI Agent Fragmentation Crisis

Enterprise teams are not making irrational choices. Each framework has real strengths:

- LangGraph is excellent for stateful, complex workflows — but you run your own runtime with no built-in API layer

- CrewAI makes multi-agent collaboration intuitive — but it wants Python, and it assumes you share Crew's infrastructure vision

- Google ADK integrates beautifully with Vertex AI and BigQuery — but it ties you to GCP

- AWS Bedrock Agents has enterprise security baked in — but it is AWS-only and proprietary

- OpenAI Assistants is the fastest path to production — but you are locked to OpenAI's platform and pricing

- Claude Managed Agents leverages the best reasoning models — but runtime lives on Anthropic's infrastructure

Every framework is genuinely good at something. And every framework is a walled garden.

The problem is not that these frameworks are bad. The problem is that enterprises have dozens of teams making independent choices, and within six months, you have AI infrastructure that looks less like a platform and more like a museum of competing opinions.

New AI agent frameworks are emerging at roughly twice the pace of foundation model releases. Every month brings a new contender with real strengths and real advocates. Telling your teams to wait for a winner is not a strategy — it is a delay tactic that lets the competition move faster.

The Five Wars Every Enterprise Is Fighting

When I talk to engineering leaders about AI agents, the same five debates surface in every organization. I call them the Five Wars.

War 1: The Framework War

Your ML engineers, who have spent ten to fifteen years working with data, want the framework that best expresses their mental model for agent architecture. Your software engineers want the one that integrates cleanly with their existing microservices. Your DevOps team wants the one with the best Helm chart and the most predictable operational profile.

The result is fragmentation. You end up with LangGraph agents in one cluster, CrewAI agents in another, OpenAI Assistants in a third, and nobody can give you the combined cost, error rate, or token consumption across all of them.

War 2: The Cloud War

Every major cloud provider wants to be the home for your AI agents:

- AWS gives you Bedrock, ECS Fargate, EKS, Lambda, and App Runner

- GCP gives you Vertex AI, ADK, Cloud Run, and GKE

- Azure gives you Azure AI Studio, Container Apps, and AKS

- Databricks wants you to build and run agents inside their Lakehouse platform

- Snowflake has Cortex AI and wants agents inside their data cloud

No enterprise chooses just one. Different business units have existing commitments to different cloud providers — and those commitments are not going away. You are not consolidating to a single cloud. You need agents that work across all of them.

War 3: The Team War

Three completely different groups believe they should own AI agents.

Software engineers argue: "Agents are just microservices with LLM calls. We have the deployment expertise, the CI/CD pipelines, the on-call rotations. We should own this."

ML engineers argue: "The core of an agent is prompt engineering, model selection, RAG architecture, and evaluation. We have spent a decade building expertise in these areas. A software engineer would ship a terrible system prompt."

Business analysts and product managers argue: "We understand the business process this agent is automating. We know the edge cases and the requirements better than any engineer. We should not need to write Python to configure a customer support workflow."

All three are right. All three have legitimate ownership claims. The mistake is framing this as a choice of who builds the agent — when the real answer is that all three should be able to contribute in the way that fits their skills.

War 4: The Language War

Ask any team which language to use for agents and you will get:

- Backend teams: Python or TypeScript, depending on what they already use

- Enterprise Java shops: Kotlin or Java — they are not rewriting their services in Python

- Data engineering teams: Python only, they have never touched Node.js

- Frontend teams: TypeScript exclusively

- Platform engineers: Go, for the tooling

Every existing agent framework effectively requires Python. That excludes the Java team with the deepest domain knowledge for a particular workflow. It frustrates the TypeScript team that wants agents embedded in their existing Node.js services.

War 5: The Governance Black Hole

Here is the silent problem that gets buried under the framework debates: none of these frameworks ship with enterprise governance.

Every production deployment needs:

- RBAC — who can deploy agents, who can invoke them, who can modify them

- Cost attribution — which team, which agent, which model, how much

- Audit trail — who changed what, when, in a durable immutable log

- Secret management — API keys and credentials, not in your repository

Every enterprise ends up building this separately, after the fact, when something goes wrong. Three months in, you discover that one team's agents consumed 80 percent of your OpenAI budget because nobody set spend limits. You have no idea which agent called which tool because there is no centralized trace. The security team asks for an audit log and it does not exist.

Governance should not be an afterthought bolted on after deployment. It should be a structural property of the platform — a side effect of deploying, not a separate project.

How AgentBreeder Compares to Existing Frameworks

Before diving into the architecture, here is how AgentBreeder stacks up against the frameworks your teams are already debating. The key insight: every existing tool is excellent at the layer it was designed for — but none of them solve the coordination problem above that layer.

Framework Comparison

AgentBreeder vs leading agent frameworks — capability by capability

| Capability | ★ ThisAgentBreeder | LangGraph | CrewAI | OpenAI Agents | Google ADK | Claude SDK | Mastra | AWS Bedrock |

|---|---|---|---|---|---|---|---|---|

Framework agnostic Run LangGraph, CrewAI, ADK, Mastra, Claude SDK, or your own code — same pipeline | Yes | No | No | No | No | No | No | No |

Cloud agnostic (AWS + GCP + Azure) Bedrock = AWS only · ADK = GCP preferred · OpenAI = OpenAI cloud · Mastra = runs anywhere | Yes | Partial | Partial | No | No | Partial | Yes | No |

No-code visual builder | Yes | No | No | Partial | No | No | No | Partial |

YAML low-code config | Yes | No | No | No | No | No | No | No |

Full-code SDK | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |

Built-in RBAC & governance Governance is a side effect of deploying, not a separate project | Yes | No | No | Partial | No | No | No | Partial |

Cost attribution per team | Yes | No | No | Partial | No | No | No | Partial |

Immutable audit log | Yes | No | No | No | No | No | No | Partial |

Shared org-wide registry Agents, prompts, tools, RAGs, MCPs — discoverable across all teams | Yes | No | No | No | No | No | No | No |

Multi-language (Python + TypeScript) Mastra = TypeScript only · Claude SDK = Python + TS · most others = Python only | Yes | No | No | Partial | No | Partial | No | Partial |

MCP server support | Yes | Partial | Partial | Yes | Partial | Yes | Yes | No |

Open source (Apache 2.0) Claude SDK is MIT-licensed; Claude models are proprietary | Yes | Yes | Yes | No | Yes | Partial | Yes | No |

Why "Just Pick One Framework" Does Not Work

The standard advice from consultants and blog posts is to standardize on one framework. Pick LangGraph. Pick CrewAI. Make a decision and enforce it.

This advice fails for most enterprises because you do not control all of your teams, and your teams do not all have the same requirements.

The team integrating with your customer support CRM has different needs than the team building a financial analysis agent over your data warehouse. The ML research team exploring new model capabilities has different preferences than the backend team adding an agent to an existing API service.

Forcing all of these onto one framework produces one of three outcomes:

- You pick the wrong framework for most teams' use cases, reducing the quality of what gets built

- You lose the engineers who refuse to use the mandated stack — the ones who have the most relevant expertise

- You slow down the entire organization while teams wait for the "approved" framework to catch up to their requirements

The enterprise AI agent problem is not a technology problem. It is a coordination problem — and coordination problems are not solved by mandates. They are solved by platforms.

The AgentBreeder Answer

I built AgentBreeder on a simple premise: agent creation should be completely agnostic of the framework war, the cloud war, the team war, and the language war.

The core insight is this: what every enterprise actually needs is not a better framework. They need a deployment and governance layer that sits above all frameworks — a platform that lets each team choose their preferred tools while automatically providing the shared infrastructure that makes every agent governable, observable, and portable.

Write one agent.yaml. Deploy to any cloud. Govern automatically. Done.

The Five-Layer Architecture

AgentBreeder is built in five layers, each solving one dimension of the fragmentation problem.

AgentBreeder Architecture

Five layers that make agent creation framework-, cloud-, and team-agnostic

Define Once · Deploy Anywhere · Govern Automatically

Layer 1: The Governed Marketplace

Every organization needs a shared registry of reusable agent components. When Team 1 builds a Zendesk integration tool, Team 6 should be able to use it without rebuilding it from scratch. When the ML team crafts a great system prompt for customer intent classification, every team should be able to reference it.

The marketplace contains:

- Agents — versioned deployments, with ownership, access control, and full deploy history

- Prompts — versioned system prompts, shareable and reusable across teams

- Tools and MCP Servers — integrations built once, used everywhere

- RAG Indexes — vector stores, graph databases, shared knowledge bases

- Models — the org-approved model list with provider abstraction and fallback chains

The marketplace creates institutional knowledge — a shared library that compounds value over time. The more teams use AgentBreeder, the more reusable components accumulate, and the faster every team can build.

Layer 2: Three Builder Modes for Three Types of Builders

No-code, low-code, and full-code builders. All three produce the same internal representation and go through the same deploy pipeline.

No-Code — Visual Builder: Business analysts and product managers use a drag-and-drop canvas to wire together agents, tools, RAG sources, and MCP servers. They pick models from the approved list, set access policies from dropdowns, and deploy — without writing a single line of code.

Low-Code — YAML Config: Engineers who want structure without boilerplate write one agent.yaml:

name: customer-support-agent

version: 1.0.0

framework: langgraph

model:

primary: claude-sonnet-4

fallback: gpt-4o

tools:

- ref: tools/zendesk-mcp

- ref: tools/order-lookup

deploy:

cloud: aws

runtime: ecs-fargateThat is the complete configuration for a production-ready LangGraph agent on AWS ECS. RBAC, cost attribution, audit trail, and observability are injected automatically.

Full-Code — SDK: Senior engineers and researchers who want complete programmatic control use the Python or TypeScript SDK. They implement custom routing, state machines, multi-model pipelines, and complex orchestration. The SDK generates the agent.yaml plus bundles their code — it does not bypass the governance engine.

The key property: the governance layer does not care which builder was used. All three paths lead to the same deploy pipeline.

Layer 3: Language Agnostic

AgentBreeder is not a Python-only platform:

| Language | Status |

|---|---|

| Python | Available |

| TypeScript | Available |

| Java | Roadmap |

| Kotlin | Roadmap |

| Go | Roadmap |

Your Java team builds in Java. Your TypeScript team stays in TypeScript. The container build system handles the packaging.

Layer 4: Framework Agnostic

Every major framework is a first-class target:

| Framework | Status |

|---|---|

| LangGraph | Available |

| CrewAI | Available |

| Claude SDK (Anthropic) | Available |

| OpenAI Agents SDK | Available |

| Google ADK | Available |

| Custom / Bring Your Own | Available |

The engine/runtimes layer handles all framework-specific container configuration. Each runtime knows how to package, start, and health-check agents for its framework. The deployer does not know or care which framework runs inside the container.

Layer 5: Runtime Agnostic

One agent.yaml. Any cloud target:

| Cloud | Runtimes |

|---|---|

| AWS | ECS Fargate, App Runner, EKS, Lambda |

| GCP | Cloud Run, GKE |

| Azure | Container Apps, AKS |

| Databricks | MLflow Jobs |

| Kubernetes | Any cluster |

| Local | Docker Compose |

| Claude Managed | Anthropic-hosted |

The deployer layer translates your agent.yaml deploy section into the appropriate cloud-specific infrastructure. Same code, any target.

Governance as a Structural Property, Not a Checklist

This is the principle that I am most proud of in AgentBreeder's design.

Every agentbreeder deploy automatically:

- Validates RBAC — can this user or team deploy to this target?

- Registers the agent in the org-wide registry

- Attributes cost to the deploying team

- Writes an immutable audit log entry

- Injects an OpenTelemetry sidecar for distributed traces, token counts, and error rates

You do not configure governance. You do not add a governance step to your pipeline. There is no governance form to fill out.

Governance is a side effect of deploying — which means it happens every time, for every agent, without any additional work from the team building the agent.

This is the property that top-down governance mandates can never achieve. When the path of least resistance leads to compliance, compliance is what you get. When compliance requires extra steps, people skip the steps.

Who Should Build Agents? Everyone — With Their Preferred Tools

My answer to the team war:

The ML engineer owns the brain. They define the system prompt, select and tune the model, design the RAG architecture, evaluate agent quality, and publish prompts and evaluation results to the shared marketplace. They work in Python and use the Full Code SDK.

The software engineer owns the integrations. They build the tools and MCP servers that connect agents to business systems, design the deployment topology, own the CI/CD pipeline, and maintain the agent.yaml. They work in Python or TypeScript.

The product manager or analyst owns the workflow. They use the visual No-Code builder to wire together existing components from the marketplace, create new agent configurations from approved templates, and test behavior in the playground — without blocking on engineering time for every iteration.

All three contribute. None of them are blocked waiting for the others. The marketplace is the coordination layer — it lets teams share work without sharing workflows.

The Founding Problem, in One Sentence

Every enterprise AI agent stack fragments by design: each framework, each cloud provider, each vendor builds walled gardens that maximize their lock-in rather than your portability.

AgentBreeder is built on the opposite principle.

Define once. Deploy anywhere. Govern automatically.

One agent.yaml. Any framework. Any cloud. Any team. Any language. Full governance, zero extra configuration.

If you are an engineering leader watching your teams fragment across a dozen different agent stacks, AgentBreeder is what you build to end that fragmentation — without taking away the tools your teams already know and prefer.

Try AgentBreeder Today

AgentBreeder is open-source, Apache 2.0 licensed, and available now.

pip install agentbreeder

agentbreeder init

agentbreeder deploy --target localThe frameworks will keep coming. The clouds will keep adding AI services. The team debates will keep happening.

AgentBreeder makes all of it irrelevant.

- Read the getting started guide

- Browse the full

agent.yamlspecification - See migration guides from LangGraph, CrewAI, and more

- Star the repo on GitHub

Rajit Saha is the founder of AgentBreeder. He built this platform after watching enterprise AI agent deployments fragment repeatedly across frameworks, clouds, and teams. AgentBreeder is his answer to the coordination problem at the heart of enterprise AI.