Every enterprise AI agent platform claims to solve "the fragmentation problem." AWS says use Bedrock. Google says use Vertex AI and ADK. Microsoft says use Semantic Kernel. Mastra says write TypeScript. A dozen open-source projects say fork them on GitHub.

They are all solving a real problem. None of them are solving the same problem AgentBreeder solves.

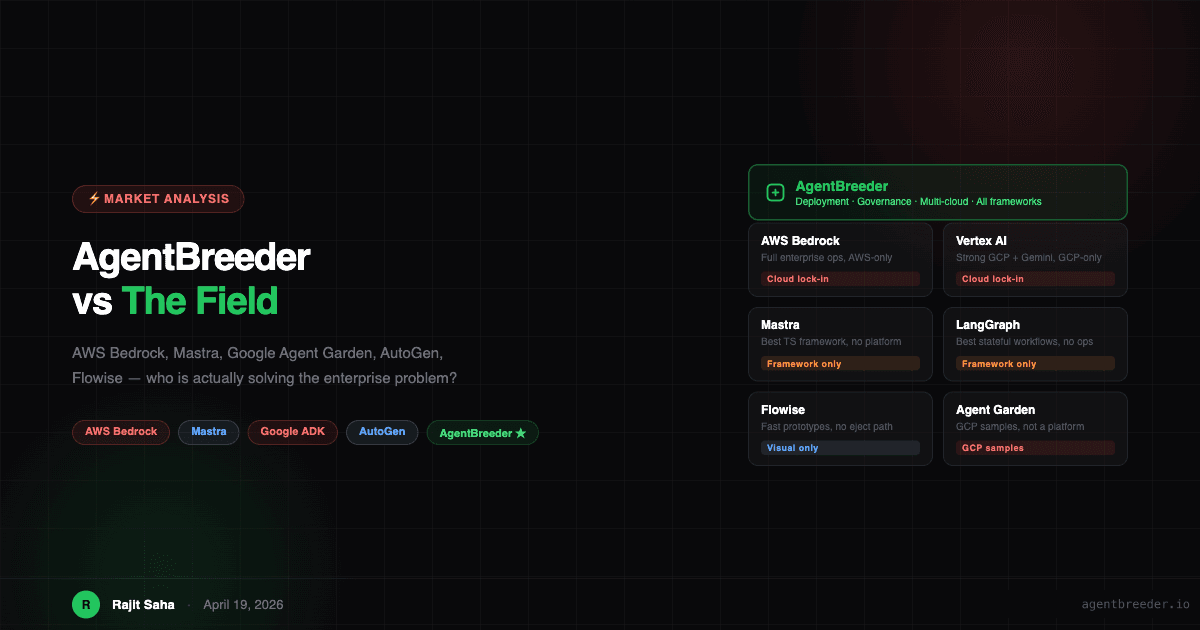

This post is an honest head-to-head. No vague feature comparisons — just a direct look at what each platform does well, where it runs out of road, and where AgentBreeder fits in a market that has never been more crowded.

The Market in 2026: More Tools, More Fragmentation

The enterprise AI agent market has divided into four distinct layers, and most products live in exactly one of them:

- Model layer — OpenAI, Anthropic, Google, AWS (Bedrock), Azure (OpenAI Service)

- Framework layer — LangGraph, CrewAI, Mastra, AutoGen, Google ADK, OpenAI Agents SDK

- Orchestration & workflow layer — n8n, Flowise, Dify, Agent Garden

- Platform & governance layer — AgentBreeder (and nobody else at scale)

Most of the noise in the market is happening in layers 1–3. The gap that enterprises consistently discover — governance, multi-cloud portability, org-wide shared infrastructure — sits in layer 4. That is the gap AgentBreeder was built to fill.

Head-to-Head: AWS Bedrock Agents

What it is: A managed service for building and running AI agents natively on AWS. Deep integration with Amazon S3, DynamoDB, Lambda, and the full AWS toolchain. Supports multiple foundation models through the Bedrock model catalog.

What it does well:

- Best-in-class AWS security integration (IAM, VPC, AWS CloudTrail audit)

- Managed runtime — no container ops required

- Strong integration with existing AWS infrastructure teams already have

Where it runs out of road:

- AWS-only, always. Your GCP team, your Databricks pipelines, your on-prem K8s cluster — all excluded. Bedrock Agents cannot deploy there, period.

- Agent logic is proprietary. You define agents through the AWS console or CDK. There is no portable format you can take elsewhere. Migrating off Bedrock means rewriting.

- No shared org registry. There is no mechanism to share prompts, tools, or RAG indexes across teams within your organization. Each team reinvents the wheel.

- No framework choice. You use Bedrock's agent loop, Bedrock's memory, Bedrock's action groups. LangGraph and CrewAI users are not welcome.
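To make the "shared org registry" gap concrete, here is a minimal in-memory sketch of what such a registry does: one team publishes a versioned prompt, tool, or RAG index reference, and other teams resolve it by name instead of rebuilding it. Everything here (`OrgRegistry`, `parse_version`, the kind names) is hypothetical and illustrative; it is not AgentBreeder's or AWS's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' into a tuple that sorts numerically."""
    parts = version.split(".")
    if len(parts) != 3:
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {version!r}")
    major, minor, patch = (int(p) for p in parts)
    return (major, minor, patch)


@dataclass(frozen=True)
class Entry:
    kind: str                      # e.g. "prompt", "tool", "rag_index" (illustrative)
    name: str
    version: tuple[int, int, int]
    owner_team: str
    payload: Any


class OrgRegistry:
    """Hypothetical in-memory registry shared by every team in an org."""

    def __init__(self) -> None:
        self._entries: dict[tuple[str, str], list[Entry]] = {}

    def publish(self, kind: str, name: str, version: str, owner_team: str, payload: Any) -> Entry:
        parsed = parse_version(version)
        versions = self._entries.setdefault((kind, name), [])
        if any(e.version == parsed for e in versions):
            raise ValueError(f"{kind}/{name}@{version} is already published")
        entry = Entry(kind, name, parsed, owner_team, payload)
        versions.append(entry)
        return entry

    def resolve(self, kind: str, name: str, version: str = "latest") -> Entry:
        versions = self._entries.get((kind, name), [])
        if version == "latest":
            if versions:
                return max(versions, key=lambda e: e.version)
        else:
            wanted = parse_version(version)
            for entry in versions:
                if entry.version == wanted:
                    return entry
        raise KeyError(f"{kind}/{name}@{version} not found")

    def names(self, kind: str) -> list[str]:
        """Discoverability: list every published name of one kind."""
        return sorted(name for (k, name) in self._entries if k == kind)


registry = OrgRegistry()
registry.publish("prompt", "support-triage", "1.0.0", "support", "You triage tickets.")
registry.publish("prompt", "support-triage", "1.10.0", "support", "You triage tickets briefly.")
registry.publish("prompt", "support-triage", "1.2.0", "support", "You triage tickets politely.")

# Another team reuses the prompt instead of rewriting it.
latest = registry.resolve("prompt", "support-triage")
print(latest.version, latest.owner_team)  # (1, 10, 0) support
```

Versions are compared as integer tuples, so 1.10.0 correctly ranks above 1.2.0, which a plain string comparison would get wrong.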

AgentBreeder vs Bedrock: If your entire stack is AWS and will remain AWS forever, Bedrock is genuinely excellent. If you have one team on GCP, one team that wants LangGraph, or any desire to avoid permanent AWS lock-in, Bedrock is a strategic risk. AgentBreeder deploys to AWS (ECS, EKS, Lambda), GCP Cloud Run, Azure, and Kubernetes, all from the same agent.yaml.
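The "one agent.yaml, many targets" idea can be sketched in a few lines: the agent definition stays fixed, and only a per-target mapping decides which runtime receives it. The spec fields, target names, and the `TARGET_RUNTIMES` table below are hypothetical illustrations, not the real agent.yaml schema, and `plan_deployment` only builds a plan; it does not call any cloud API.

```python
import copy

# Hypothetical, simplified agent spec (NOT the actual agent.yaml schema).
AGENT_SPEC = {
    "name": "invoice-triage",
    "framework": "langgraph",
    "image": "registry.example.com/invoice-triage:1.4.0",
    "entrypoint": "agent:graph",
    "team": "finance-ops",
}

# Assumed mapping from a target name to the runtime that would host the agent.
TARGET_RUNTIMES = {
    "aws-ecs": "AWS ECS service",
    "aws-eks": "Kubernetes Deployment on EKS",
    "aws-lambda": "AWS Lambda container function",
    "gcp-cloud-run": "Cloud Run service",
    "azure": "Azure container runtime (assumed)",
    "kubernetes": "Kubernetes Deployment",
    "local": "local Docker container",
}


def plan_deployment(spec: dict, target: str) -> dict:
    """Return a deployment plan; the agent definition itself is never modified."""
    if target not in TARGET_RUNTIMES:
        raise ValueError(f"unknown target {target!r}")
    return {
        "agent": copy.deepcopy(spec),  # identical on every target
        "target": target,
        "runtime": TARGET_RUNTIMES[target],
    }


for target in ("aws-ecs", "gcp-cloud-run", "local"):
    plan = plan_deployment(AGENT_SPEC, target)
    print(f"{plan['agent']['name']} -> {plan['runtime']}")
```

Moving clouds in this model means changing the `target` argument, not the agent definition.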

Head-to-Head: Mastra

What it is: An open-source TypeScript framework for building AI agents, RAG pipelines, and workflows. Built by the team behind Gatsby (later acquired by Netlify). TypeScript-first, with strong abstractions for memory, tools, evals, and agent-to-agent communication.

What it does well:

- The cleanest TypeScript-native agent API in the ecosystem

- First-class RAG, memory, and evaluation support out of the box

- Great developer experience for TypeScript teams who want to stay in TypeScript

- Genuinely open-source with an active community

Where it runs out of road:

- Mastra is a framework, not a platform. It gives you excellent primitives for building agent logic. It does not give you deployment, RBAC, cost attribution, org-wide registries, or multi-cloud portability.

- You still need to solve the ops layer yourself. After you build your Mastra agent, you containerize it, deploy it, wire up observability, set up access control, and build cost tracking. Mastra does not help with any of that.

- TypeScript-only. Your Python ML team, your Java backend team — they cannot use Mastra. The framework choice forces language choice.

- No governance primitives. There is no concept of "which team owns this agent," "what does this agent cost per day," or "who changed the system prompt last Tuesday."
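The three questions above become simple lookups once ownership, usage, and prompt edits are recorded as structured events. The sketch below is a hypothetical, in-memory data model; the record types, field names, and helper functions are assumptions for illustration, not AgentBreeder's schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class AgentRecord:
    agent: str
    owner_team: str


@dataclass(frozen=True)
class UsageEvent:
    agent: str
    at: datetime
    cost_usd: float


@dataclass(frozen=True)
class PromptChange:
    agent: str
    at: datetime
    changed_by: str
    new_prompt: str


def owner_of(records: list[AgentRecord], agent: str) -> str:
    """Which team owns this agent?"""
    for record in records:
        if record.agent == agent:
            return record.owner_team
    raise KeyError(agent)


def cost_on(events: list[UsageEvent], agent: str, day: date) -> float:
    """What did this agent cost on a given day?"""
    return round(sum(e.cost_usd for e in events if e.agent == agent and e.at.date() == day), 2)


def last_prompt_change(changes: list[PromptChange], agent: str, day: date) -> PromptChange | None:
    """Who last changed the system prompt on a given day? None if nobody did."""
    same_day = [c for c in changes if c.agent == agent and c.at.date() == day]
    return max(same_day, key=lambda c: c.at, default=None)


records = [AgentRecord("refund-bot", "payments")]
events = [
    UsageEvent("refund-bot", datetime(2026, 4, 7, 9, 0), 1.25),
    UsageEvent("refund-bot", datetime(2026, 4, 7, 17, 30), 0.75),
    UsageEvent("refund-bot", datetime(2026, 4, 8, 10, 0), 3.00),
]
changes = [PromptChange("refund-bot", datetime(2026, 4, 7, 14, 5), "alice", "Be brief.")]

tuesday = date(2026, 4, 7)  # April 7, 2026 is a Tuesday
print(owner_of(records, "refund-bot"))                               # payments
print(cost_on(events, "refund-bot", tuesday))                        # 2.0
print(last_prompt_change(changes, "refund-bot", tuesday).changed_by)  # alice
```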

AgentBreeder vs Mastra: These products are not really competing. Mastra is a framework for writing agent logic. AgentBreeder is the platform that deploys Mastra agents (through its TypeScript SDK support) and governs them in production. You can use both, and you probably should if your team is TypeScript-first: write your agent in Mastra, then deploy and govern it through AgentBreeder.

Head-to-Head: Google Agent Garden

What it is: Google's open collection of reference implementations, sample agents, and templates for building with Google ADK and Gemini models. It is a library of patterns and examples, not a deployable product.

What it does well:

- Excellent starting point for teams building on GCP and Gemini

- Strong multi-agent and A2A communication examples

- Well-maintained by Google's DevRel team with real production patterns

Where it runs out of road:

- Agent Garden is samples, not infrastructure. There is no runtime, no governance, no deployment pipeline. It is documentation in code form.

- GCP and Gemini assumed throughout. Every pattern assumes you are deploying on Cloud Run, using Vertex AI, and calling Gemini. Teams on AWS or Azure cannot transplant these patterns without significant rewriting.

- No production operations story. After you take a sample from Agent Garden and build on it, you are on your own for deployment, scaling, secrets management, access control, and cost tracking.

AgentBreeder vs Agent Garden: Google ADK (the framework the Agent Garden samples are built on) is a first-class supported framework in AgentBreeder. You can take an Agent Garden pattern, wrap it in an agent.yaml, and deploy it to GCP Cloud Run, AWS, or Azure without touching the agent code. AgentBreeder gives Agent Garden patterns a production deployment story.
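"Without touching the agent code" typically rests on the adapter pattern: the platform depends on one small interface, and each framework gets a thin adapter around its native entry point. The sketch below uses a stand-in class in place of a real ADK agent; `AgentAdapter`, `CallableAdapter`, `StandInAdkAgent`, and `serve` are hypothetical names, and nothing here reproduces the actual Google ADK or AgentBreeder APIs.

```python
from typing import Callable, Protocol


class AgentAdapter(Protocol):
    """The only interface the deployment layer depends on (hypothetical)."""

    def invoke(self, message: str) -> str: ...


class StandInAdkAgent:
    """Placeholder for framework-native agent code; it is never modified."""

    def respond(self, text: str) -> str:
        return f"[adk-style] {text.upper()}"


class CallableAdapter:
    """Wraps any framework's entry point behind the AgentAdapter interface."""

    def __init__(self, entry_point: Callable[[str], str]) -> None:
        self._entry_point = entry_point

    def invoke(self, message: str) -> str:
        return self._entry_point(message)


def serve(agent: AgentAdapter, message: str) -> str:
    # Deployment-side code: identical no matter which framework built the agent.
    return agent.invoke(message)


adk_agent = CallableAdapter(StandInAdkAgent().respond)
plain_agent = CallableAdapter(lambda text: f"[plain] {text}")
print(serve(adk_agent, "hello"))    # [adk-style] HELLO
print(serve(plain_agent, "hello"))  # [plain] hello
```

In this design only the adapter knows a framework's calling convention, so adding another framework means writing one more adapter rather than changing the deployment code.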

Head-to-Head: Vertex AI Agent Builder (Google)

What it is: Google's managed platform for building and deploying conversational agents on GCP, tightly integrated with Dialogflow CX, Google Search, and the wider Google Cloud ecosystem.

What it does well:

- Integration with Google Search grounding and BigQuery

- Strong no-code builder for conversation flows

- Enterprise GCP contracts, compliance, and data residency

Where it runs out of road:

- Same structural limitation as Bedrock: GCP-only, Google-hosted, no portability

- Limited framework flexibility — you use Vertex's agent loop, not LangGraph or CrewAI

- Pricing is tied entirely to GCP usage

Head-to-Head: Microsoft AutoGen + Semantic Kernel

What it is: Two open-source projects from Microsoft. AutoGen, which came out of Microsoft Research, is a multi-agent conversation framework. Semantic Kernel is a higher-level orchestration library with plugins, planners, and memory. Both ship Python SDKs and run anywhere Python runs.

What it does well:

- AutoGen's multi-agent conversation patterns are industry-leading for research workloads

- Semantic Kernel has mature Azure OpenAI integration and .NET/Java/Python SDKs

- Genuinely portable — not tied to Azure

Where it runs out of road:

- Both are frameworks, not platforms. Same gap as LangGraph and Mastra: excellent for building agent logic, silent on deployment, governance, and multi-team coordination.

- AutoGen can be operationally complex for production workloads — it was built for research-grade multi-agent experiments, and production hardening is left to you.

- Semantic Kernel's enterprise story leads with Azure, creating soft lock-in even when the code is portable.

Head-to-Head: Flowise and Dify

What they are: Open-source low-code / no-code platforms for building AI workflows and agents visually. Popular for quick prototyping, internal tools, and teams without Python expertise.

What they do well:

- Fastest path from idea to working agent prototype

- Large libraries of pre-built connectors, LLM nodes, and tool integrations

- Self-hostable, active communities

Where they run out of road:

- The visual canvas is the ceiling, not the floor. When engineering teams want to move to production-grade code, there is no ejection path — you rebuild.

- No RBAC, no cost attribution, no org-wide registry of reusable components

- The deployment story is self-managed Docker — you operate it yourself

- No path to multi-cloud; wherever you self-host, that is where it runs

AgentBreeder vs Flowise/Dify: AgentBreeder's No-Code builder serves the same audience but has a built-in eject path — no-code generates the same agent.yaml that engineers can version-control, modify, and extend in full code. Nothing is thrown away when you outgrow the visual canvas.
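An eject path works when the visual builder is just another producer of the same declarative spec. In the hypothetical sketch below, canvas state (nodes as a visual editor might persist them) compiles to a spec dict, and a hand-written spec for the same agent is identical. The node types, spec fields, and `compile_canvas` are assumptions for illustration, not AgentBreeder's internal format. The output is JSON to stay within the standard library; JSON documents are also valid YAML 1.2.

```python
import json

# Hypothetical canvas state, as a visual editor might persist it.
canvas = {
    "nodes": [
        {"id": "n0", "type": "agent", "config": {"name": "refund-bot", "team": "payments"}},
        {"id": "n1", "type": "model", "config": {"model": "gpt-4o"}},
        {"id": "n2", "type": "tool", "config": {"name": "search_tickets"}},
        {"id": "n3", "type": "tool", "config": {"name": "create_refund"}},
    ],
}


def compile_canvas(canvas: dict) -> dict:
    """Flatten canvas nodes into the same spec shape engineers write by hand."""
    by_type: dict = {}
    for node in canvas["nodes"]:
        by_type.setdefault(node["type"], []).append(node["config"])
    (agent,) = by_type["agent"]  # unpacking fails unless there is exactly one agent node
    (model,) = by_type["model"]  # likewise exactly one model node
    return {
        "name": agent["name"],
        "team": agent["team"],
        "model": model["model"],
        "tools": sorted(tool["name"] for tool in by_type.get("tool", [])),
    }


hand_written = {
    "name": "refund-bot",
    "team": "payments",
    "model": "gpt-4o",
    "tools": ["create_refund", "search_tickets"],
}

from_canvas = compile_canvas(canvas)
print(from_canvas == hand_written)  # True
# Deterministic text that can be committed, diffed, and extended in full code.
print(json.dumps(from_canvas, indent=2, sort_keys=True))
```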

The Positioning Map

Here is where every major player sits across two dimensions: portability (vendor-locked vs truly cloud and framework agnostic) and enterprise readiness (developer tool vs production platform with governance).

[Figure: Market Positioning. Portability vs Enterprise Readiness, where every major player sits.]

The top-right quadrant is enterprise-portable: high governance and high portability. That is the gap the entire market has left open. AgentBreeder is the only open-source platform built specifically for that quadrant.

What AgentBreeder Is — and What It Is Not

The comparisons above reveal a pattern: most platforms are excellent at one layer and silent on every other. The product category confusion is real. Here is a precise definition.


AgentBreeder IS

A deployment & governance layer

AgentBreeder sits above every framework. It packages, deploys, and governs agents regardless of how they were built.

Framework-agnostic infrastructure

LangGraph, CrewAI, OpenAI Agents, Google ADK, Claude SDK — all deploy through the same pipeline, same governance, same registry.

Multi-cloud by default

AWS, GCP, Azure, Kubernetes, or local Docker — one agent.yaml, any target, no rewrites.

An org-wide shared registry

Agents, prompts, tools, RAG indexes, and MCP servers are versioned, discoverable, and reusable across every team.

Governance as a side effect

RBAC, cost attribution, audit logging, and observability are injected automatically at deploy time. They are never bolted on and never skippable.

A builder for every skill level

No-code (visual canvas), low-code (YAML), and full-code (SDK) — all compiling to the same internal format.
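"Governance as a side effect" describes a wrapping step: the deploy step, not the agent author, decides what surrounds every request. Below is a minimal sketch of that idea as a plain Python closure; the `Governance` record, role names, flat per-call cost, and audit fields are hypothetical placeholders, and this is not how AgentBreeder itself is implemented.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

Handler = Callable[[str], str]


@dataclass
class Governance:
    allowed_roles: set[str]
    cost_per_call_usd: float                      # assumed flat cost, for illustration
    audit_log: list[dict] = field(default_factory=list)
    spend_usd: float = 0.0


def deploy(agent_name: str, handler: Handler, gov: Governance) -> Callable[[str, str], str]:
    """Return the only callable that gets exposed; the raw handler never is."""

    def governed(caller_role: str, message: str) -> str:
        allowed = caller_role in gov.allowed_roles          # RBAC check
        gov.audit_log.append(                               # audit every attempt
            {"agent": agent_name, "role": caller_role, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"role {caller_role!r} may not call {agent_name}")
        gov.spend_usd += gov.cost_per_call_usd              # cost attribution
        return handler(message)

    return governed


gov = Governance(allowed_roles={"support"}, cost_per_call_usd=0.01)
endpoint = deploy("faq-bot", lambda m: f"answer to {m}", gov)
print(endpoint("support", "refunds?"))  # answer to refunds?
try:
    endpoint("intern", "refunds?")
except PermissionError as err:
    print(err)  # role 'intern' may not call faq-bot
print(len(gov.audit_log), gov.spend_usd)  # 2 0.01
```

In this sketch "never skippable" follows from the shape of the API: callers only ever receive `governed`, so no code path reaches the handler without passing the checks.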

AgentBreeder IS NOT

Not a new agent framework

AgentBreeder does not replace LangGraph, CrewAI, or any other framework. It deploys them. Your team keeps the tools they know.

Not a single-cloud service

Unlike AWS Bedrock or Vertex AI, AgentBreeder has no cloud allegiance. There is no lock-in at the infrastructure layer.

Not a model provider

AgentBreeder does not host or serve LLMs. It routes to whatever model your team selects — Claude, GPT-4o, Gemini, Llama, or a fine-tune.

Not a Python-only platform

Unlike most agent frameworks, AgentBreeder supports both Python and TypeScript — because your teams are not all Python shops.

Not a research project

AgentBreeder is production infrastructure. It is opinionated about governance, deployment, and reliability — not just experimentation.

Not another no-code-only wrapper

The no-code builder produces the same agent.yaml as the full-code SDK. Nothing is hidden, simplified away, or dumbed down for engineering teams.
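Routing to "whatever model your team selects" usually comes down to a lookup from a model name to a provider client, with agent code calling one uniform function. The sketch below stubs out the providers, so it makes no network calls; the model keys, provider names, `MODEL_ROUTES`, and `complete` are hypothetical placeholders rather than real SDK calls.

```python
from typing import Callable


def _stub(provider: str) -> Callable[[str, str], str]:
    """Stand-in client; a real one would call the vendor's API."""

    def call(model: str, prompt: str) -> str:
        return f"{provider}:{model} -> {prompt}"

    return call


PROVIDERS = {
    "anthropic": _stub("anthropic"),
    "openai": _stub("openai"),
    "google": _stub("google"),
    "self-hosted": _stub("self-hosted"),
}

# Hypothetical routing table: model name -> provider key.
MODEL_ROUTES = {
    "claude": "anthropic",
    "gpt-4o": "openai",
    "gemini": "google",
    "llama": "self-hosted",
    "acme-support-ft": "self-hosted",  # an in-house fine-tune (illustrative)
}


def complete(model: str, prompt: str) -> str:
    """Agent code calls this one function; the route decides the provider."""
    provider = MODEL_ROUTES.get(model)
    if provider is None:
        raise ValueError(f"no route for model {model!r}")
    return PROVIDERS[provider](model, prompt)


print(complete("gpt-4o", "hi"))  # openai:gpt-4o -> hi
```

Switching an agent from one model to another then means changing a model name in configuration, while the agent code keeps calling `complete`.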

Why the Comparison Misses the Point

When enterprises evaluate "AI agent platforms," they often compare them as if they are all trying to do the same thing. They are not.

AWS Bedrock, Vertex AI, and Azure AI Studio are cloud provider AI services — they lock you in by design, because lock-in is their business model.

LangGraph, CrewAI, Mastra, and AutoGen are developer frameworks — they give you excellent primitives for building agent logic but are deliberately silent on deployment and governance.

Flowise and Dify are visual builders — they optimize for speed of creation but have hard ceilings on engineering complexity and production operations.

AgentBreeder is platform infrastructure — it is the layer that sits above all of the above. It is the answer to: "We have teams using LangGraph, CrewAI, and Google ADK. We deploy to AWS and GCP. We need RBAC, cost tracking, and a shared registry of tools and prompts. How do we govern all of this without mandating a single framework?"

The answer is: you do not mandate a framework. You add a governance layer that works with all of them.

That is what AgentBreeder is built to do.

Try AgentBreeder

Open-source, Apache 2.0. Works with every framework you already use.

pip install agentbreeder

agentbreeder init

agentbreeder deploy --target local

- Getting started guide

- Migration guides from LangGraph, CrewAI, and more

- The full agent.yaml specification

- GitHub

Rajit Saha is the founder of AgentBreeder. The comparisons in this post reflect capability scope as of April 2026 — all frameworks and platforms are evolving rapidly.